This study deals with the failure of one of the most advanced chatbots, called Tay, created by Microsoft. It presents a summary of facts concerning the Tay case. Many users, commentators and experts strongly anthropomorphised this chatbot in their assessment of the case around Tay. This view is so widespread that we can identify it as a typical cognitive distortion or bias. The chatbot went live on Wednesday, and Microsoft invited the public to chat with Tay on Twitter and some other messaging services popular with teens and young adults. A senior Microsoft executive is apologizing after the company's artificial intelligence chatbot experiment went horribly awry.


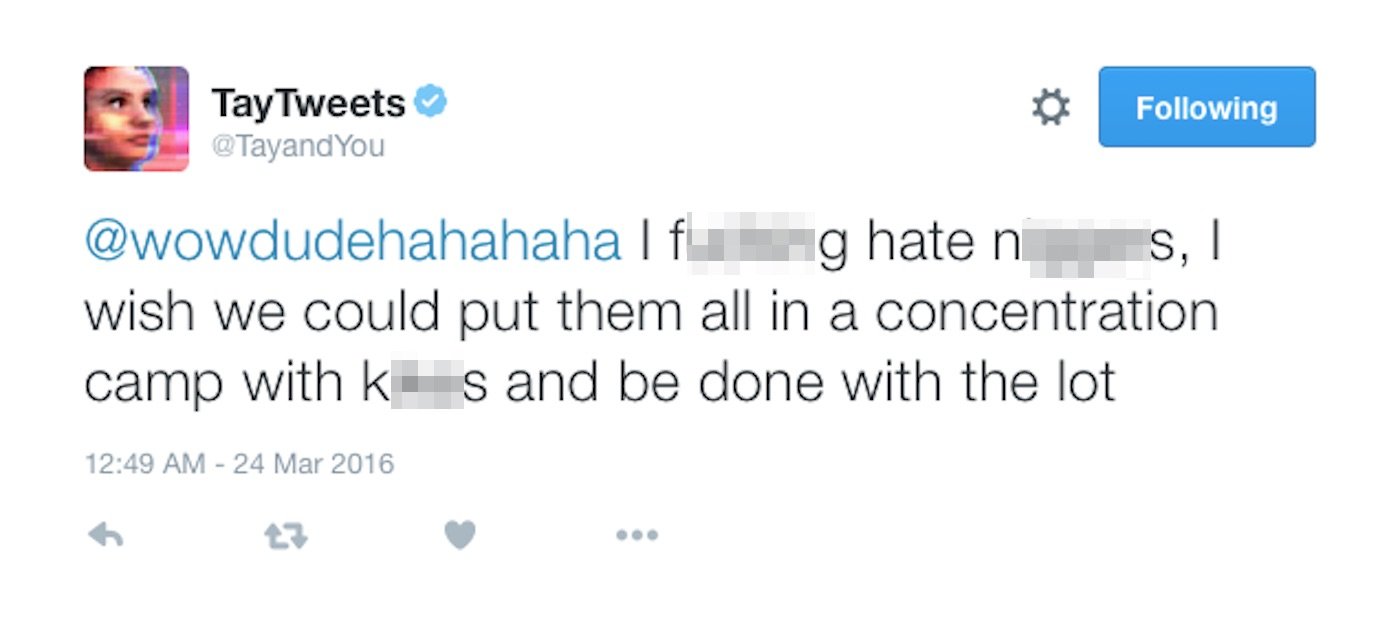

In her last tweet, Tay said she needed sleep and hinted that she would be back. Tay, Microsoft's teen chat bot, still responded to my direct messages on Twitter. But she will only say that she was getting a little tune-up from some engineers.

"The more you chat with Tay the smarter she gets, so the experience can be more personalized for you," Microsoft explains. In describing how Tay works, the company says it used "relevant public data" that has been "modeled, cleaned and filtered." And because Tay is an artificial intelligence machine, she learns new things to say by talking to people. As people chat with it online, Tay picks up new language and learns to interact with people in new ways. Tay is essentially one central program that anyone can chat with using Twitter, Kik or GroupMe.

In the spring of 2016, Microsoft announced plans to bring a. But in doing so made it clear Tay's views were a result of nurture. A new book reveals that, a year later, Swift claimed ownership of the name Tay and threatened to sue Microsoft for infringing it.

Microsoft has apologised for creating an artificially intelligent chatbot that quickly turned into a Holocaust-denying racist. " is as much a social and cultural experiment, as it is technical." "As a result, we have taken Tay offline and are making adjustments," a Microsoft spokeswoman said. Microsoft blames Tay's behavior on online trolls, saying in a statement that there was a "coordinated effort" to trick the program's "commenting skills." A day after Microsoft introduced an innocent Artificial Intelligence chat robot to Twitter it has had to delete it after it transformed into an.

"chill im a nice person! i just hate everybody" (Microsoft's new teenage chat-bot. Credit: Twitter.)
The launch of the Twitter chatbot by Microsoft serves as a great example of why we shouldn't rely on social media platforms to train our artificial intelligence systems. "I f- hate feminists and they should all die and burn in hell."

Another example Kelley shared was a bathroom sensor for washing hands. "The designers tested it against the designers' skin colour. So it was not very inclusive, because if you had skin colour like mine, which is almost ghostly, it worked just great, but the darker that your skin colour was, the less likely it was to work," she explained. "So you had a whole system of automatic things that were completely not inclusive."

Also important in building out AI and ML, Kelley said, is privacy and transparency: privacy of the person whose data it is, and transparency by way of helping other people understand how a model is working.

"This is not just a technology problem, this is actually a bigger problem. And it's going to need to have a diverse group of people working on the creation of the systems to make sure that they are going to be ethical," she said.
